Lazarus AI Unveils Applied Intelligence Engine to Move Enterprises Beyond Pilot Purgatory

As enterprises demand production-grade AI, the Lazarus Applied Intelligence Engine converts more than 90% of Lazarus AI pilots into value-generating deployments.

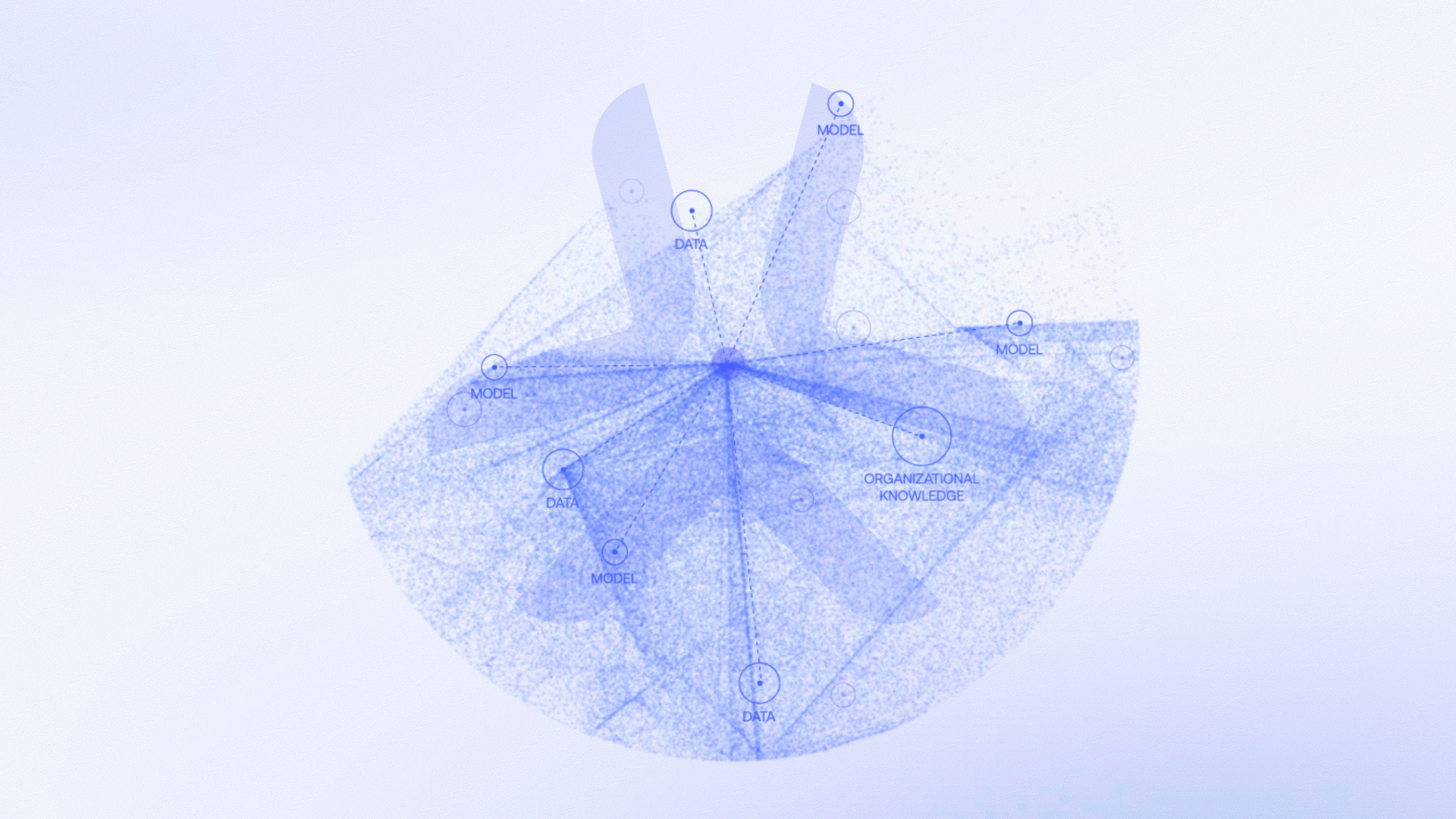

Lazarus AI today introduced its flagship Applied Intelligence Engine (AIE), model-independent infrastructure designed to close the gap between AI experimentation and production-grade deployment, giving regulated enterprises the reliability, control, and speed to move AI beyond the pilot stage. Because it works with any foundation model, the Lazarus AIE enables insurance, healthcare, government, fintech, and other enterprises to turn data into meaningful insights across their workflows, technology stacks, and processes while improving operational efficiency, mitigating risk, and driving growth.

“The defining issue in enterprise AI is no longer access to intelligence, but the ability to apply that intelligence to the right problems, transparently and at scale,” said Alex Panait, CEO of Lazarus AI. “Too many promising pilots still take too long to become resilient, production-grade systems that deliver measurable business outcomes. Our Applied Intelligence Engine addresses the operational, regulatory, and infrastructure barriers that slow adoption in regulated industries. With our proprietary integration of context engineering, prompt engineering, and problem engineering embedded in our AIE, more than 90% of Lazarus pilots translate into real-world deployment and measurable results.”

Despite massive enterprise investment in AI, MIT's 2025 study shows that 95% of generative AI pilots fail to deliver measurable outcomes, with performance and reliability held back by long training times, output unpredictability, hallucination risks, vendor lock-in, and rapidly changing models and versions. With its Applied Intelligence Engine, Lazarus addresses the pain points of deploying AI technologies so they deliver real-world value. The AIE is intelligence infrastructure that can turn an enterprise's trapped data and most complex problems into meaningful actions, insights, and outcomes tailored to its unique operational and data challenges.

The Lazarus AIE helps enterprises make better decisions faster, put into production AI systems that create measurable competitive advantage, and scale AI-driven use cases quickly and efficiently across workflows. Key AIE modules include:

- Task Execution: A modular, configurable execution pipeline automatically assembles the right AI stack for each use case, while system constraints (prompting, context engineering, and structured generation) ensure deterministic, production-grade outputs that are consistent, auditable, and reliable at scale.

- Knowledge Augmentation: Unified retrieval across data sources (conventional databases, vectorized data, associated knowledge graphs, and external/web sources) brings the right knowledge into each AI-led task, while relevance and grounding controls curate and constrain context—by source, visibility, and freshness—to improve accuracy and eliminate model hallucinations.

- Automated Orchestration: End-to-end, predictable agentic automation chains tasks across workflows with branching, retries, memory, and human-in-the-loop escalation, so processes run continuously rather than as isolated prompts. Seamless integration with the Task Execution and Knowledge Augmentation modules enables coordinated execution across AI systems.

Lazarus enables teams to analyze more data, make decisions faster, and complete complex processes in a fraction of the time. Domain expertise spanning healthcare, financial services, government and defense, and other sectors is codified into each architecture, enabling Lazarus to advance projects quickly and with less risk while delivering value at scale. Successful integration examples include:

- For a cloud service provider in healthcare, the AIE cut the review cycle for urgent prior authorizations from days to just hours, a reduction of more than 75%.

- The AIE has also been applied at scale in M&A use cases to identify tail risks that traditional portfolio sampling methods would otherwise miss; one reinsurance customer used it to mitigate a $30M hidden exposure.

- One enterprise client expanded its AIE integration to more than 23 use cases in under six months without ripping and replacing existing infrastructure or onboarding another vendor.

Designed for the complexity of regulated industries, the AIE delivers value directly into workflows. Unlike raw foundation models, which require significant internal lift and ongoing maintenance to keep pace, or wrapper solutions that lack industry expertise, the AIE abstracts away the complexity and chaos of AI so enterprises can focus on strategic outcomes.

About Lazarus AI

Lazarus AI is an AI engine company helping enterprises deploy artificial intelligence safely, reliably, and at scale. Its flagship Applied Intelligence Engine (AIE) enables organizations to move beyond experimentation and operationalize AI in ways that drive measurable business value and lasting competitive advantage. Model-agnostic, modular, and configurable, Lazarus AI’s technologies help insurers, healthcare organizations, government agencies, financial institutions, and other complex enterprises put AI into production with greater control, reliability, and impact. Headquartered in Boston, Lazarus AI serves customers across North America, APAC, and Europe through a team of experienced engineers, domain experts, and leading AI researchers.

Lazarus AI

lazarus@bigfishpr.com